Meta's Adaptive Ranking Model: Revolutionizing Ads with LLM-Scale Inference Efficiency

Introduction

Meta has long built AI-powered recommendation systems and keeps refining them to improve both user experience and advertiser outcomes. Its latest step, the Meta Adaptive Ranking Model, scales the runtime models that rank ads to the complexity and size of large language models (LLMs). The larger model promises a deeper understanding of user interests and intent, but it also sharpens a core challenge known as the inference trilemma: growing model complexity must be balanced against the low latency and the cost efficiency required of a global service used by billions of people.

The Inference Trilemma and Adaptive Routing

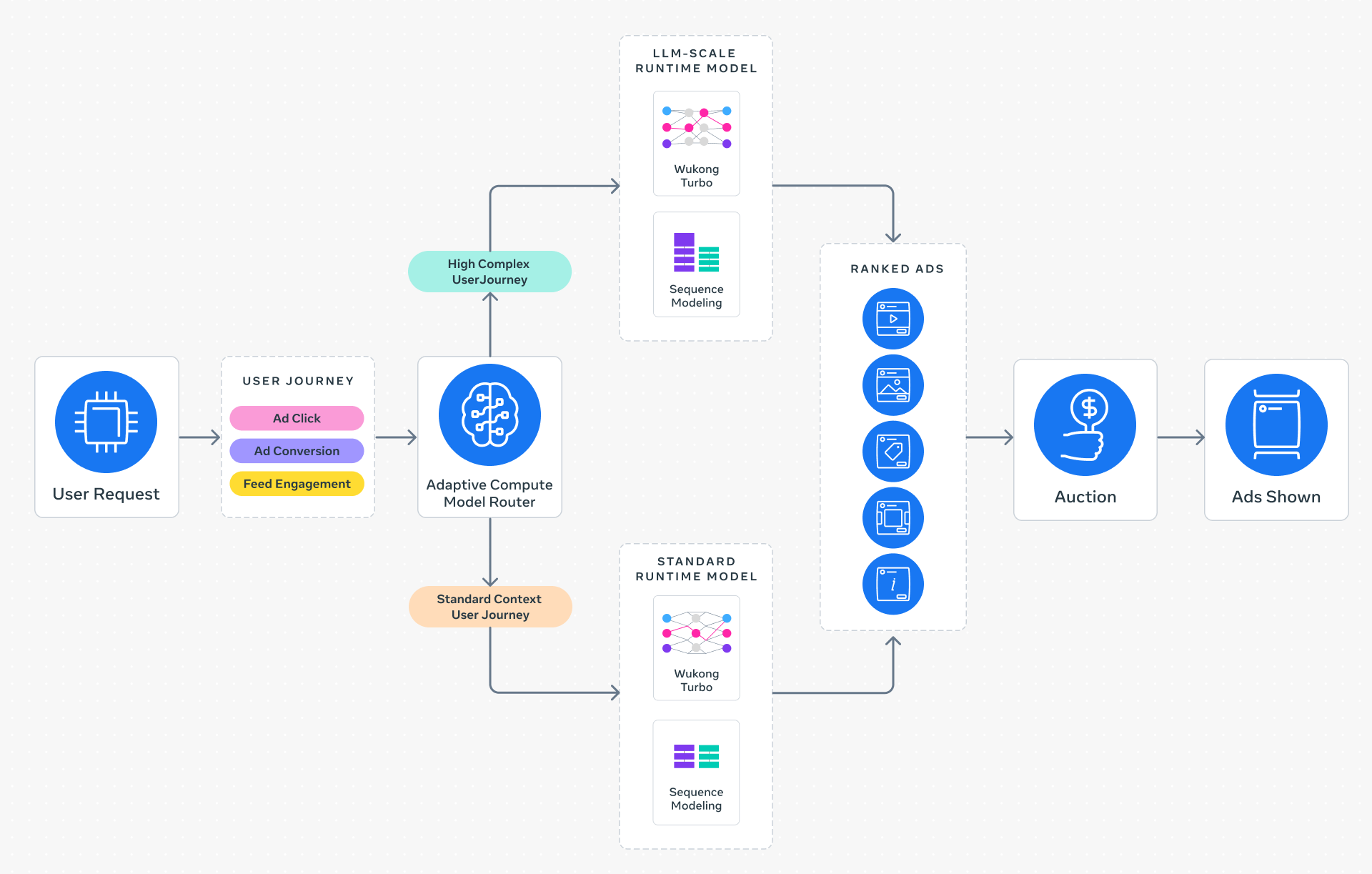

Traditionally, recommendation systems took a one-size-fits-all approach to inference, spending the same computational effort on every request. That becomes unsustainable at LLM scale, where even a single query can demand enormous compute and memory. Meta's Adaptive Ranking Model addresses this with intelligent request routing: rather than treating all requests equally, the system dynamically matches model complexity to what it understands about each user's context and intent. Each ad request is thus served by a model that is as capable as the request warrants and no more expensive than necessary, which preserves the strict sub-second latency the platform depends on while still delivering a high-quality experience for every person.
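To make the routing idea concrete, here is a minimal sketch of tiered request routing. It is not Meta's implementation: the tier names, relative costs, score thresholds, and latency estimates are all hypothetical, and the single `value_score` stands in for whatever richer signal about context and intent a production system would use.

```python
from dataclasses import dataclass
from typing import Mapping

@dataclass(frozen=True)
class ModelTier:
    name: str
    relative_cost: float  # hypothetical compute cost relative to the smallest tier
    min_score: float      # hypothetical threshold on the request's value score

# Hypothetical tiers, ordered from most to least expensive.
TIERS = [
    ModelTier("large", relative_cost=10.0, min_score=0.8),
    ModelTier("medium", relative_cost=3.0, min_score=0.4),
    ModelTier("small", relative_cost=1.0, min_score=0.0),
]

def route(value_score: float,
          latency_budget_ms: float,
          est_latency_ms: Mapping[str, float]) -> ModelTier:
    """Return the most expensive tier that the request qualifies for
    and that is expected to fit inside the latency budget."""
    for tier in TIERS:
        qualifies = value_score >= tier.min_score
        fits_budget = est_latency_ms[tier.name] <= latency_budget_ms
        if qualifies and fits_budget:
            return tier
    # Nothing fits: fall back to the cheapest tier rather than failing the request.
    return TIERS[-1]

# Hypothetical per-tier latency estimates in milliseconds.
LATENCY = {"large": 300.0, "medium": 100.0, "small": 20.0}

high_value = route(0.9, latency_budget_ms=500.0, est_latency_ms=LATENCY)
tight_budget = route(0.9, latency_budget_ms=200.0, est_latency_ms=LATENCY)
low_value = route(0.1, latency_budget_ms=500.0, est_latency_ms=LATENCY)
```

In this toy setup the same high-value request is sent to the large tier when the budget allows it and is downgraded to the medium tier when the budget is tight, while a low-value request goes straight to the small tier.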

Three Key Innovations Powering the Shift

Delivering LLM-scale models at Meta's global scale required a fundamental rethinking of the entire inference stack. The Adaptive Ranking Model rests on three pivotal innovations:

1. Inference-Efficient Model Scaling

By shifting to a request-centric architecture, the Adaptive Ranking Model can serve an LLM-scale model with sub-second latency. This allows for a more sophisticated analysis of a person's interests and intent without compromising the user experience. The architecture concentrates computational resources where they are needed instead of applying uniform processing to all inputs.
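The article does not spell out what "request-centric" involves. One common reading, assumed here purely for illustration, is that work depending only on the request (for example, encoding the user's context) is done once and then reused for every candidate ad, instead of being repeated per user-ad pair. The function and parameter names below are hypothetical.

```python
from typing import Callable, Dict, List, Sequence, TypeVar

U = TypeVar("U")  # user-side representation
A = TypeVar("A")  # candidate ad

def score_request(
    user_features: Dict[str, float],
    candidates: Sequence[A],
    encode_user: Callable[[Dict[str, float]], U],
    score_pair: Callable[[U, A], float],
) -> List[float]:
    """Score every candidate for one request, encoding the user only once."""
    user_repr = encode_user(user_features)  # the expensive step, run once per request
    return [score_pair(user_repr, ad) for ad in candidates]

# Toy stand-ins that count how often the "expensive" encoder runs.
calls = {"encode_user": 0}

def toy_encode_user(features: Dict[str, float]) -> float:
    calls["encode_user"] += 1
    return float(sum(features.values()))

def toy_score_pair(user_repr: float, ad_weight: float) -> float:
    return user_repr * ad_weight

scores = score_request(
    {"a": 1.0, "b": 2.0},
    [0.5, 1.0, 2.0],
    toy_encode_user,
    toy_score_pair,
)
```

With three candidates the toy encoder runs once rather than three times, so under this assumption the cost of the user-side model is amortized across all candidates in the request.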

2. Model/System Co-Design

Meta developed hardware-aware model architectures that deliberately align model design with the capabilities and limitations of the underlying silicon. This co-design strategy significantly improves hardware utilization across heterogeneous environments, from CPUs to specialized accelerators, so that available compute is used effectively.

3. Reimagined Serving Infrastructure

Using multi-card architectures and hardware-specific optimizations, the serving infrastructure now supports scaling to O(1T) parameters, that is, on the order of one trillion. This lets Meta serve LLM-scale runtime recommendation models efficiently, breaking through previous capacity barriers while keeping operational costs under control.
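A rough back-of-the-envelope calculation shows why a model of this size needs more than one card. The numeric precisions and the 80 GB per-card memory below are assumptions chosen for illustration, not figures from Meta, and the estimate covers only the weights, ignoring activations and any other runtime memory.

```python
def cards_for_weights(num_params: int, bytes_per_param: int, card_memory_gb: int) -> int:
    """Minimum number of cards needed just to hold the weights (decimal GB)."""
    total_bytes = num_params * bytes_per_param
    card_bytes = card_memory_gb * 10**9
    return -(-total_bytes // card_bytes)  # ceiling division on integers

ONE_TRILLION = 10**12
CARD_MEMORY_GB = 80  # hypothetical accelerator memory size

estimates = {
    label: cards_for_weights(ONE_TRILLION, nbytes, CARD_MEMORY_GB)
    for label, nbytes in [("fp32", 4), ("bf16", 2), ("int8", 1)]
}
print(estimates)  # {'fp32': 50, 'bf16': 25, 'int8': 13}
```

Even at one byte per parameter, a trillion parameters take about 1 TB of memory, well beyond a single card of this assumed size, which is consistent with the article's emphasis on multi-card serving.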

Real-World Impact and Results

The integration of LLM-scale intelligence into Meta's ads stack has already yielded measurable gains. Since launching on Instagram in Q4 2025, the Adaptive Ranking Model has delivered a +3% increase in ad conversions and a +5% increase in ad click-through rate for targeted users. These improvements translate directly into higher advertiser value without inflating the overall computational footprint, which suggests that scaling model size does not have to come at the cost of efficiency.

For businesses of all sizes, this means more relevant ad placements, better engagement metrics, and a higher return on investment. The ability to dynamically allocate computational resources also ensures that the system can continue to grow without overwhelming the underlying infrastructure.

Conclusion

Meta's Adaptive Ranking Model bends the inference scaling curve, easing the tension between model complexity and system efficiency that has long constrained recommendation systems. By replacing the rigid one-size-fits-all model with adaptive, context-aware routing, Meta can now serve LLM-scale ad models in real time. The results (more conversions, higher click-through rates, and latency held within budget) point to a new approach to AI-driven advertising. As Meta continues to refine these techniques, the impact on both user experience and advertiser outcomes is likely to deepen and may set a standard for scalable, intelligent recommendation systems.