7 Key Insights into Meta's Adaptive Ranking Model for LLM-Scale Ad Serving

Meta has long been at the forefront of AI-driven recommendation systems, constantly pushing boundaries to enhance user experiences and advertiser results. The latest leap involves scaling their Ads Recommender runtime models to the complexity and scale of Large Language Models (LLMs). This ambitious move introduces a critical challenge: the inference trilemma—balancing skyrocketing model complexity, strict latency demands, and cost efficiency for billions of users. To solve this, Meta developed the Adaptive Ranking Model (ARM), a system that intelligently bends the inference scaling curve. This article breaks down the seven essential things you need to know about this groundbreaking technology, from its core innovations to real-world performance gains.

- The Inference Trilemma and How ARM Breaks the Trade-Off

- Replacing One-Size-Fits-All with Intelligent Request Routing

- Inference-Efficient Model Scaling with a Request-Centric Architecture

- Hardware-Aware Model/System Co-Design

- Reimagined Serving Infrastructure for O(1T) Parameter Models

- Real-World Impact: Measurable Gains in Conversions and CTR

- What This Means for the Future of AI-Powered Advertising

1. The Inference Trilemma and How ARM Breaks the Trade-Off

Deploying LLM-scale models in real-time ad recommendation systems forces a difficult balancing act. On one side, greater model complexity and size lead to deeper understanding of user intent and better ad relevance. On the other, every millisecond of latency impacts user experience, and compute costs must be sustainable for a global service with billions of daily requests. This is the inference trilemma: you cannot simultaneously maximize complexity, minimize latency, and keep costs low. Meta’s Adaptive Ranking Model directly addresses this by dynamically adjusting model complexity per request instead of using a single, monolithic model. By intelligently routing requests to the most appropriate model variant, ARM effectively bends the inference scaling curve, delivering high performance without sacrificing efficiency or user experience.

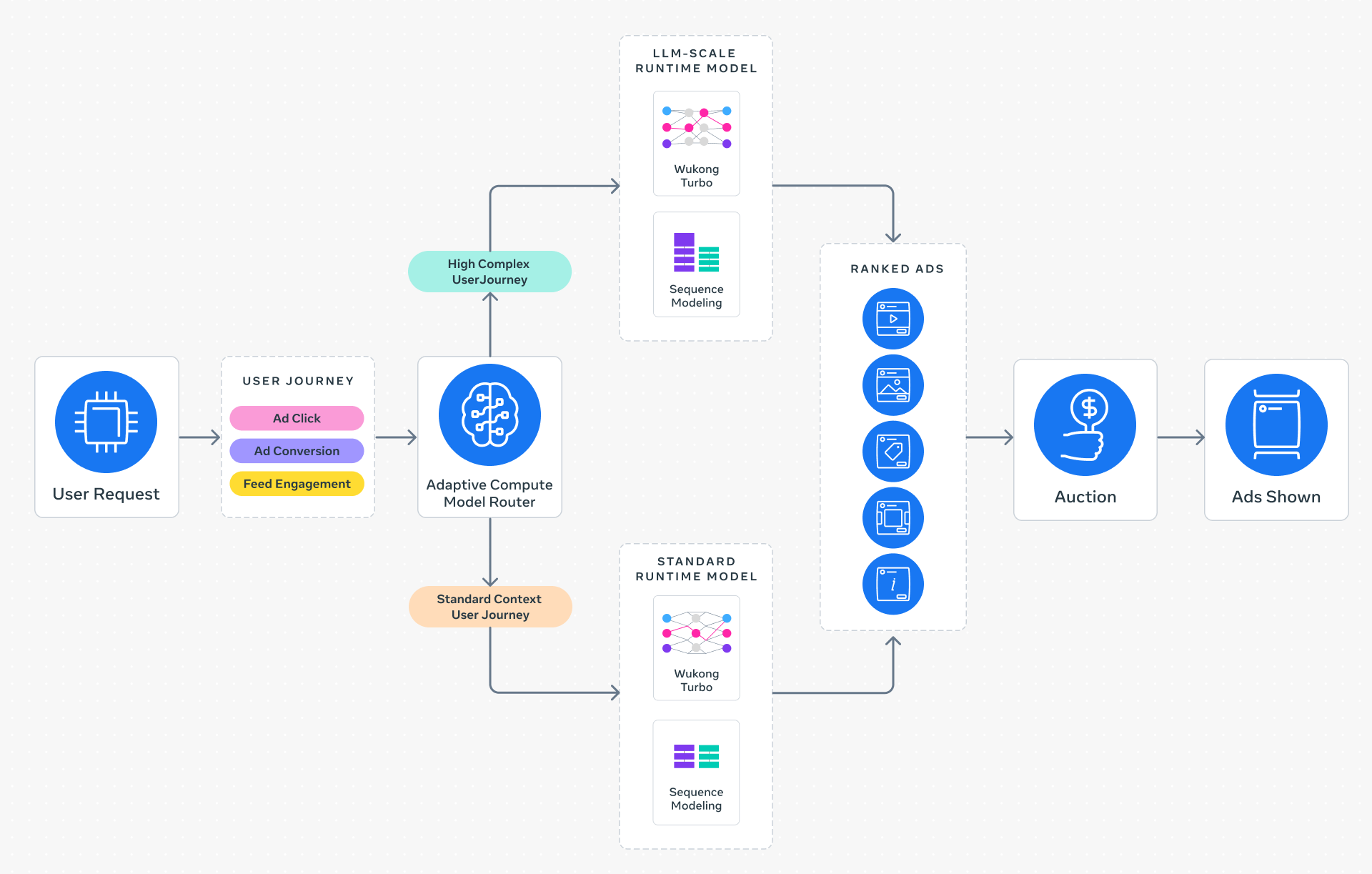
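Simple arithmetic makes the trade-off concrete. The sketch below computes the average per-request compute cost under per-request routing, relative to running the full model on every request. The three tiers, their traffic shares, and their relative costs are hypothetical numbers chosen for illustration. They are not figures published by Meta.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Tier:
    name: str
    traffic_share: float  # fraction of requests routed to this tier (hypothetical)
    relative_cost: float  # compute cost relative to the full model (full model = 1.0)


def expected_cost(tiers: List[Tier]) -> float:
    """Average per-request compute cost, relative to always running the full model."""
    total_share = sum(t.traffic_share for t in tiers)
    if abs(total_share - 1.0) > 1e-9:
        raise ValueError("traffic shares must sum to 1")
    return sum(t.traffic_share * t.relative_cost for t in tiers)


# Hypothetical mix: most traffic is cheap, and a minority gets the full model.
TIERS = [
    Tier("light", 0.6, 0.05),
    Tier("medium", 0.3, 0.25),
    Tier("full", 0.1, 1.0),
]

print(f"{expected_cost(TIERS):.3f}")  # → 0.205
```

With this made-up mix, the average cost is about a fifth of the monolithic baseline. Real savings depend entirely on how live traffic actually splits across the variants.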

2. Replacing One-Size-Fits-All with Intelligent Request Routing

Traditional serving approaches apply the same model complexity to every request, often over-provisioning for simple queries and under-serving complex ones. ARM replaces this with a dynamic system that evaluates each request in context. It considers factors such as user engagement history, device type, session context, and estimated conversion value to determine the optimal model depth. Simple requests are handled by lightweight models, freeing computational resources for complex requests that benefit from the full LLM-scale model. This request-centric routing ensures that every ad impression is served by the most effective and efficient model variant, maintaining sub-second latency across the board. The result is a system that scales gracefully, providing high-quality experiences for all users while optimizing compute spend.
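The following is a minimal sketch of per-request routing that relies on a hand-written scoring function. Every part of it is hypothetical: the request signals (`engagement_score`, `est_conversion_value`, `session_depth`), the weights, the variant names, and the latency figures. A production router would more likely be learned than hand-tuned. The sketch only shows the shape of the decision: choose the most capable variant that the request justifies and that fits the remaining latency budget.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Request:
    # Hypothetical illustrative signals, not Meta's actual features.
    engagement_score: float      # 0..1, derived from user engagement history
    est_conversion_value: float  # estimated value of a conversion, arbitrary units
    session_depth: int           # items seen so far in the current session
    latency_budget_ms: float     # time left for ranking this request


@dataclass(frozen=True)
class ModelVariant:
    name: str
    p99_latency_ms: float  # hypothetical tail latency of this variant
    min_score: float       # route here only if the request score reaches this


# Ordered from most to least capable.
VARIANTS = [
    ModelVariant("full", 80.0, 0.7),
    ModelVariant("medium", 30.0, 0.4),
    ModelVariant("light", 8.0, 0.0),
]


def request_score(req: Request) -> float:
    """Blend signals into a 0..1 'worth spending compute on' score (hypothetical weights)."""
    value_term = min(req.est_conversion_value / 100.0, 1.0)
    session_term = min(req.session_depth / 20.0, 1.0)
    return 0.5 * req.engagement_score + 0.35 * value_term + 0.15 * session_term


def route(req: Request) -> str:
    """Pick the most capable variant the score justifies and the latency budget allows."""
    score = request_score(req)
    for v in VARIANTS:
        if score >= v.min_score and v.p99_latency_ms <= req.latency_budget_ms:
            return v.name
    return VARIANTS[-1].name  # fall back to the cheapest variant


print(route(Request(0.9, 150.0, 25, 100.0)))  # high-value request, ample budget → full
print(route(Request(0.9, 150.0, 25, 40.0)))   # same request, tight budget → medium
print(route(Request(0.1, 5.0, 2, 100.0)))     # low-value request → light
```

Checking the latency budget inside the loop lets a high-value request degrade gracefully to a cheaper variant instead of missing its deadline.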

3. Inference-Efficient Model Scaling with a Request-Centric Architecture

To serve LLM-scale models at Meta’s traffic volume, the inference stack had to be fundamentally rethought. ARM introduces a request-centric architecture where the model adapts its computation path based on the input complexity. Instead of executing the full model graph for every request, it employs conditional computation—skipping unnecessary layers or experts when simpler reasoning suffices. This reduces the average inference cost dramatically while still allowing the model to tap into its full capacity for hard cases. The architecture also leverages early-exit mechanisms and knowledge distillation to maintain accuracy. By focusing compute where it matters most, ARM enables Meta to deploy models with hundreds of billions of parameters—approaching LLM scale—without exceeding strict latency budgets. This innovation is key to scaling deep understanding of user interests without degrading the real-time experience.
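Early exit is one of the conditional-computation techniques named above, and a toy version shows how it works. In the sketch, each "layer" is a hypothetical stand-in that refines a scalar logit, whereas a real ranker operates on tensors and typically attaches a separate exit head to each exit point. Inference stops as soon as the predicted probability is confident in either direction. The 1.5× layer and the 0.9 threshold are illustrative assumptions, not Meta's architecture.

```python
import math
from typing import Callable, List, Tuple

# Hypothetical layer: maps the current logit to a refined logit.
Layer = Callable[[float], float]


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def early_exit_predict(logit: float, layers: List[Layer],
                       threshold: float = 0.9) -> Tuple[float, int]:
    """Run layers in order and exit once max(p, 1 - p) >= threshold.

    Returns (probability, number_of_layers_executed).
    """
    p = sigmoid(logit)
    for depth, layer in enumerate(layers, start=1):
        logit = layer(logit)
        p = sigmoid(logit)
        if max(p, 1.0 - p) >= threshold:  # confident enough: skip remaining layers
            return p, depth
    return p, len(layers)  # hard case: used the full depth


# Toy model: each layer sharpens the logit by 1.5x.
LAYERS = [lambda h: 1.5 * h for _ in range(4)]

print(early_exit_predict(3.0, LAYERS)[1])   # easy positive → 1
print(early_exit_predict(-3.0, LAYERS)[1])  # easy negative → 1
print(early_exit_predict(0.2, LAYERS)[1])   # ambiguous → 4
```

Easy requests pay for one layer and ambiguous ones pay for all four, which is how the average cost falls while hard cases keep full capacity.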

4. Hardware-Aware Model/System Co-Design

Meta’s infrastructure comprises heterogeneous hardware, including CPUs, GPUs, and custom accelerators (e.g., Meta’s own MTIA chips). Achieving high utilization across such diverse environments requires close alignment between model architecture and hardware capabilities. ARM’s development involved co-designing model structures with the underlying silicon in mind. For example, attention mechanisms are optimized for memory bandwidth characteristics of different accelerators, and layer dimensions are chosen to maximize parallelism on multi-card deployments. This co-design approach minimizes data movement and reduces idle cycles, boosting throughput per watt. By understanding hardware bottlenecks—such as memory hierarchy, interconnect speeds, and compute unit limitations—ARM achieves significantly higher hardware efficiency than generic LLM serving solutions. This efficiency is essential for cost-effectively serving LLM-scale RecSys models at Meta’s planetary scale.
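One concrete form of "choosing layer dimensions to maximize parallelism" is padding a layer width so that it splits evenly across cards and every per-card shard aligns to the accelerator's matrix-tile size. This helper is hypothetical. The tile size of 128 is an assumed, commonly used alignment and is not a published MTIA parameter.

```python
def aligned_dim(desired: int, num_cards: int, tile: int = 128) -> int:
    """Smallest dimension >= desired that splits evenly across num_cards,
    with every per-card shard a multiple of the (assumed) tile size."""
    if desired <= 0 or num_cards <= 0 or tile <= 0:
        raise ValueError("all arguments must be positive")
    unit = tile * num_cards
    return ((desired + unit - 1) // unit) * unit  # round up to a multiple of unit


def padding_overhead(desired: int, aligned: int) -> float:
    """Extra compute/memory fraction spent on padding."""
    return aligned / desired - 1.0


dim = aligned_dim(5000, num_cards=8)
print(dim, dim // 8)                          # → 5120 640
print(f"{padding_overhead(5000, dim):.1%}")   # → 2.4%
print(aligned_dim(4096, num_cards=8))         # already aligned → 4096
print(aligned_dim(4096, num_cards=6))         # awkward card count → 4608
```

The last two lines show why co-design matters: the same 4096-wide layer fits 8 cards exactly, but it needs about 12.5% padding on 6 cards.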

5. Reimagined Serving Infrastructure for O(1T) Parameter Models

Serving models on the order of one trillion parameters (O(1T)) demands a radical overhaul of the serving infrastructure. ARM uses multi-card architectures in which model shards are distributed across multiple accelerators, and inference requests are processed in parallel using pipeline parallelism and tensor parallelism. Customized kernel implementations, optimized for Meta’s hardware, reduce communication overhead between cards. The system also caches intermediate activations and precomputed embeddings to skip redundant computations. This reimagined serving stack allows ARM to handle the memory footprint and compute demands of O(1T) parameter models while maintaining real-time responsiveness. The infrastructure is designed to scale horizontally, adding accelerator resources automatically as traffic grows. As a result, the cost per inference stays manageable even as models become more sophisticated, which enables continued progress in ad relevance.
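The sketch below shows two pieces of the reasoning above. The first is a back-of-the-envelope lower bound on how many cards are needed just to hold O(1T) weights. The second is column-wise tensor parallelism, in which each card owns a slice of a weight matrix's output columns and the partial outputs are concatenated (an all-gather on real hardware). The 96 GB card size, the 2-byte (bf16) weights, and the 80% usable-memory fraction are assumptions. The sketch omits pipeline parallelism, caching, and real inter-card communication.

```python
import math
from typing import List

Matrix = List[List[float]]


def matmul(x: List[float], w: Matrix) -> List[float]:
    """y = x @ w for one input row; w has shape (in_dim, out_dim)."""
    return [sum(x[i] * w[i][j] for i in range(len(x))) for j in range(len(w[0]))]


def shard_columns(w: Matrix, num_cards: int) -> List[Matrix]:
    """Split w's output columns into num_cards contiguous shards, one per card."""
    out_dim = len(w[0])
    if out_dim % num_cards:
        raise ValueError("out_dim must divide evenly across cards")
    step = out_dim // num_cards
    return [[row[c * step:(c + 1) * step] for row in w] for c in range(num_cards)]


def column_parallel_linear(x: List[float], shards: List[Matrix]) -> List[float]:
    """Each card computes its output slice; concatenation stands in for an all-gather."""
    partials = [matmul(x, shard) for shard in shards]  # concurrent on real cards
    return [v for part in partials for v in part]


def min_cards_for_weights(num_params: float, bytes_per_param: float,
                          card_mem_gb: float, usable_fraction: float = 0.8) -> int:
    """Lower bound on cards needed to hold the weights alone
    (ignores activations, caches, and runtime overhead)."""
    total_bytes = num_params * bytes_per_param
    usable_bytes = card_mem_gb * 1e9 * usable_fraction
    return math.ceil(total_bytes / usable_bytes)


W = [[1, 2, 3, 4], [5, 6, 7, 8]]
x = [1, 1]
print(matmul(x, W))                                 # → [6, 8, 10, 12]
print(column_parallel_linear(x, shard_columns(W, 2)))  # same result → [6, 8, 10, 12]
print(min_cards_for_weights(1e12, 2, 96))           # 1T bf16 params, 96 GB cards → 27
```

Even this optimistic bound of roughly 2 TB of weights spread over 27 cards explains why the serving stack has to be multi-card by design.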

6. Real-World Impact: Measurable Gains in Conversions and CTR

Since its launch on Instagram in the fourth quarter of 2025, ARM has delivered tangible improvements for advertisers. For users selected for the adaptive routing, Meta observed a +3% increase in ad conversions and a +5% increase in ad click-through rate (CTR). These gains are even more pronounced for high-intent queries where the full LLM-scale model is applied. Importantly, these uplift numbers were achieved without increasing overall system latency—ARM maintained the sub-second response times that users expect. The system also improved computational efficiency across the board, meaning that the gains did not come at the cost of higher infrastructure spend. For businesses of all sizes, from small advertisers to large enterprises, ARM translates directly into better return on ad spend (ROAS) and more relevant ad experiences for users. This real-world validation underscores the practical value of bending the inference scaling curve.

7. What This Means for the Future of AI-Powered Advertising

Meta’s Adaptive Ranking Model represents a paradigm shift in how large-scale AI models are deployed for real-time applications. By solving the inference trilemma, it opens the door to applying ever-larger models in latency-sensitive environments like ads, search, and recommendations. The key lesson is that model size alone is not the bottleneck—it is the serving architecture that must evolve. ARM’s approach of intelligent routing, hardware-aware design, and request-centric computation can be extended to other domains such as organic ranking, feed personalization, and even real-time language models. For advertisers, this means continued improvements in targeting accuracy and campaign performance. For the industry, it sets a new benchmark for efficiency at scale. As AI models grow, innovations like ARM will be essential to ensure that the benefits reach users and businesses without crippling infrastructure costs.

In conclusion, Meta’s Adaptive Ranking Model is not just a technical achievement—it is a strategic enabler for the next generation of ad systems. By dynamically matching model complexity to request needs and co-designing with hardware, Meta has bent the inference scaling curve. The result is a win-win: better ad performance for advertisers and a seamless, relevant experience for users. As the line between recommendation systems and LLMs continues to blur, ARM provides a blueprint for serving intelligence at planetary scale.